Part 3 - Building Your AI-Ready Data Stack: Data ingestion design pattern

A 10-part series on building your first data stack from 0 to 1 and getting it ready for AI.

Hello readers,

Welcome to Part 3 of Building Your AI-Ready Data Stack. Last time we covered the technical design of our data architecture. I received quite a few questions about data ingestion pipelines and design patterns, so in this part we'll take a deep dive into the main types of data ingestion design patterns.

In this article, we'll explore the most common data ingestion design patterns, discussing what they are, why you might use them, and how to implement them. We'll cover:

Batch Ingestion

Real-Time/Streaming Ingestion

Change Data Capture (CDC)

Lambda Architecture

Kappa Architecture

Let’s dive right in!

What is Data Ingestion

Data ingestion is the process of importing data from various sources into a data store for further processing and analysis. It's a critical component of any data platform, as it lays the foundation for all subsequent data operations and insights.

Choosing the right data ingestion approach is crucial for the success of your AI and analytics initiatives. Remember, whether it's analytics dashboards, reports, or AI models, everything depends on trusted, accurate, high-quality data in the system. Selecting the right pattern for the right job therefore matters a great deal.

The ideal pattern will depend on factors such as data volume, velocity, variety, and the specific requirements of your use case.

For each pattern, we'll provide pros and cons, as well as suggestions for open-source, cloud-specific, and cloud-agnostic tools to help you get started.

Pattern 1: Batch Ingestion

Batch ingestion involves periodically collecting and importing large volumes of data into a data store. The data is typically processed in batches at scheduled intervals (e.g., hourly, daily, weekly).

Why use it?

Batch ingestion is ideal for:

Large datasets that don't require immediate processing

Historical data analysis and reporting

Workloads with predictable and manageable data volumes

Cost-sensitive use cases (batch is generally cheaper than real-time)

How to implement it?

A typical batch ingestion pipeline includes the following steps:

Extract data from sources (databases, files, APIs)

Transform data as needed (cleaning, normalization, aggregation)

Load data into target data store (data warehouse, data lake)
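The three steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production pipeline: the inline CSV, the `orders` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a warehouse or lake.

```python
import csv
import io
import sqlite3

# Hypothetical source extract; in practice this would be a file, a database dump, or an API response.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,20.00
3,alice,
"""

def extract(raw: str) -> list[dict]:
    """Extract: read every row from the source in one pass (the 'batch')."""
    return list(csv.DictReader(io.StringIO(raw)))

def transform(rows: list[dict]) -> list[tuple]:
    """Transform: trim and lowercase customer names, drop rows with no amount."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append((int(row["order_id"]), row["customer"].strip().lower(), float(amount)))
    return cleaned

def load(conn: sqlite3.Connection, rows: list[tuple]) -> None:
    """Load: write the whole batch to the target table in a single transaction."""
    with conn:
        conn.execute(
            "CREATE TABLE IF NOT EXISTS orders "
            "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
        )
        # INSERT OR REPLACE keeps the job safe to re-run on the same batch.
        conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", rows)

def run_batch(conn: sqlite3.Connection, raw: str = RAW_CSV) -> int:
    rows = transform(extract(raw))
    load(conn, rows)
    return len(rows)

conn = sqlite3.connect(":memory:")  # stand-in for the target data store
loaded = run_batch(conn)
```

In a real setup, a scheduler (cron, an orchestrator, or one of the tools below) would call something like `run_batch` at each interval. Making the load step idempotent, as the upsert does here, means a failed run can simply be retried.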

Tools for batch ingestion:

Open-source: Apache Sqoop (retired to the Apache Attic in 2021, so mainly relevant for legacy Hadoop setups), Apache Flume, Apache NiFi

Cloud-specific: AWS Glue, Azure Data Factory, Google Cloud Dataflow

Pros:

Simple and well-understood architecture

Cost-effective for large data volumes

Supports complex data transformations

Wide ecosystem of tools and frameworks

Cons:

Not suitable for real-time or near-real-time use cases

May lead to stale data if update frequency is low

Can be resource-intensive and time-consuming for very large datasets

Pattern 2: Real-Time/Streaming Ingestion

Real-time or streaming ingestion involves continuously capturing and processing data as it is generated. Data is typically ingested in small, individual records or micro-batches and made available for immediate consumption.

Why use it?

Real-time ingestion is ideal for:

Use cases that require immediate data processing and analysis

Handling high-velocity data from sources like sensors, clickstreams, and logs

Enabling real-time decision-making and actions

Powering real-time dashboards and alerting systems

How to implement it?

A real-time ingestion pipeline typically includes the following components:

Message queue or log to capture streaming data (Kafka, Kinesis, Pub/Sub)

Stream processing engine to transform and enrich data (Spark Streaming, Flink, Storm)

Real-time data store to serve processed data (Cassandra, HBase, Elasticsearch)
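To make the three components concrete, here is a toy sketch that uses only the Python standard library. It assumes a `queue.Queue` as a stand-in for the message log, a plain function as the "stream processor", and a dictionary as the serving store. The event shape and function names are made up for illustration, and none of this reflects the actual Kafka, Flink, or Cassandra APIs.

```python
import queue
from collections import defaultdict

def produce(topic: queue.Queue, events: list[dict]) -> None:
    """Publish events to the topic as they are generated."""
    for event in events:
        topic.put(event)

def consume_micro_batch(topic: queue.Queue, max_records: int) -> list[dict]:
    """Pull up to max_records events that are available right now."""
    batch = []
    while len(batch) < max_records:
        try:
            batch.append(topic.get_nowait())
        except queue.Empty:
            break
    return batch

def process(batch: list[dict], store: dict) -> None:
    """Transform step: keep a running page-view count per page."""
    for event in batch:
        store[event["page"]] += 1

topic = queue.Queue()          # stand-in for a Kafka/Kinesis/Pub/Sub topic
page_views = defaultdict(int)  # stand-in for a real-time serving store

produce(topic, [
    {"user": "u1", "page": "/home"},
    {"user": "u2", "page": "/pricing"},
    {"user": "u3", "page": "/home"},
])

# A real consumer would poll forever; this loop stops once the queue is drained.
while True:
    batch = consume_micro_batch(topic, max_records=2)
    if not batch:
        break
    process(batch, page_views)
```

The point of the sketch is the shape of the loop: results in `page_views` are updated after every small batch, so a dashboard reading that store sees fresh numbers without waiting for a scheduled job.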

Tools for real-time ingestion:

Open-source: Apache Kafka, Apache Pulsar, RabbitMQ

Cloud-specific: AWS Kinesis, Azure Event Hubs, Google Cloud Pub/Sub

Fully managed: Confluent for Kafka, StreamNative for Pulsar, CloudAMQP

Pros:

Enables real-time insights and actions

Handles high-velocity and high-volume data

Supports complex event processing and analytics

Integrates well with modern, distributed architectures

Cons:

More complex to set up and manage than batch

Requires specialized skills and tools for stream processing

Can be more expensive than batch for equivalent data volumes

May be overkill for use cases that don't require real-time processing

Pattern 3: Change Data Capture (CDC)

Change Data Capture (CDC) is a design pattern that captures incremental changes made to a database and applies them to a target data store in near real-time. CDC uses transaction logs or triggers to identify and capture changes. A key feature of CDC is that it applies specifically to database change events (inserts, updates, and deletes), not to system events, application events, log events, and so on.

Why use it?

CDC is ideal for:

Synchronizing data between transactional and analytical systems

Enabling real-time analytics on operational data

Feeding real-time dashboards and applications with up-to-date data

Replicating data for disaster recovery and high availability

How to implement it?

A CDC pipeline typically includes the following components:

Source database with CDC enabled (e.g., MySQL, PostgreSQL, Oracle)

CDC tool to capture and transmit changes (Debezium, Estuary, Oracle GoldenGate)

Target data store to apply changes (data warehouse, data lake, NoSQL database)
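The "apply changes" step is the part that is easiest to show in code. The sketch below assumes change events shaped loosely like a Debezium envelope, with an `op` code (`c` for create, `u` for update, `d` for delete) plus `before` and `after` row images. The events are heavily simplified, and the `id` key, the sample rows, and the `apply_change` helper are hypothetical.

```python
def apply_change(target: dict, event: dict) -> None:
    """Apply one change event to the target, keyed by primary key."""
    op = event["op"]
    if op in ("c", "u"):          # create or update: upsert the new row image
        row = event["after"]
        target[row["id"]] = row
    elif op == "d":               # delete: remove by the old row's key
        target.pop(event["before"]["id"], None)
    else:
        raise ValueError(f"unknown operation: {op!r}")

# Simplified change events, in the order they were committed at the source.
changes = [
    {"op": "c", "before": None, "after": {"id": 1, "email": "old@example.com"}},
    {"op": "c", "before": None, "after": {"id": 2, "email": "two@example.com"}},
    {"op": "u", "before": {"id": 1, "email": "old@example.com"},
                "after": {"id": 1, "email": "new@example.com"}},
    {"op": "d", "before": {"id": 2, "email": "two@example.com"}, "after": None},
]

replica = {}  # stand-in for the target data store
for change in changes:
    apply_change(replica, change)
```

Because creates and updates are both handled as upserts and deletes tolerate a missing key, replaying the same ordered stream leaves the target in the same state. That is a useful property when a CDC tool redelivers events after a restart.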

Tools for CDC:

Cloud-specific: AWS Database Migration Service (DMS), Azure Data Factory, Google Cloud Datastream

Cloud-agnostic: Estuary

Pros:

Enables near real-time data synchronization

Minimizes impact on source systems when log-based (reads the transaction log instead of running expensive queries)

Supports heterogeneous source and target systems

Integrates well with existing data pipelines and architectures

Cons:

Requires enabling CDC on source databases (may need DBA involvement)

Can be complex to set up and manage, especially for multiple sources

May increase load on source systems (especially with trigger-based capture) and on networks

Some CDC tools can be expensive for high-volume workloads

Pattern 4: Lambda Architecture

The Lambda Architecture is a design pattern that combines batch and real-time processing to enable comprehensive and accurate data analysis. It consists of three layers: batch, speed, and serving.

Why use it?

The Lambda Architecture is ideal for:

Use cases that require both real-time and historical data analysis

Handling complex data transformations and aggregations

Ensuring data consistency and accuracy across batch and real-time views

Supporting ad-hoc queries and data exploration

How to implement it?

A Lambda Architecture includes the following components:

Batch layer: ingests and processes historical data (Hadoop, Spark)

Speed layer: ingests and processes real-time data (Spark Streaming, Flink)

Serving layer: combines batch and real-time views and serves data to applications (Cassandra, HBase)
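A small sketch may help show how the three layers fit together. It assumes a page-view counting use case, a hypothetical `BATCH_CUTOFF` marking the last completed batch run, and in-memory `Counter` objects standing in for the batch and speed views. A real deployment would compute these in separate systems.

```python
from collections import Counter

# (timestamp, page) events; the master dataset holds everything seen so far.
EVENTS = [(1, "/home"), (2, "/pricing"), (3, "/home"), (4, "/home"), (5, "/pricing")]
BATCH_CUTOFF = 3  # hypothetical: the last batch run covered timestamps <= 3

def batch_layer(events, cutoff):
    """Recompute the full historical view from the master dataset."""
    return Counter(page for ts, page in events if ts <= cutoff)

def speed_layer(events, cutoff):
    """Cover only the recent events the batch view has not absorbed yet."""
    return Counter(page for ts, page in events if ts > cutoff)

def serve(batch_view, speed_view, page):
    """Serving layer: answer a query by merging both views."""
    return batch_view[page] + speed_view[page]

batch_view = batch_layer(EVENTS, BATCH_CUTOFF)
speed_view = speed_layer(EVENTS, BATCH_CUTOFF)
```

Calling `serve(batch_view, speed_view, "/home")` adds the historical count to the recent one. Note that the counting logic exists twice, once per layer, which is exactly the maintenance cost listed under the cons below.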

Tools for Lambda Architecture:

Open-source: Apache Hadoop, Apache Spark, Apache Cassandra

Cloud-specific: AWS EMR, Azure HDInsight, Google Cloud Dataproc

Cloud-agnostic: Databricks

Pros:

Provides a comprehensive view of data (batch + real-time)

Supports complex data processing and analytics

Ensures data consistency and accuracy

Enables ad-hoc querying and data exploration

Cons:

Requires maintaining two separate processing pipelines (batch and speed)

Can be complex to set up and manage

May introduce latency due to batch processing

Requires more infrastructure and resources than simpler architectures

Pattern 5: Kappa Architecture

The Kappa Architecture is a simplification of the Lambda Architecture that uses a single, real-time processing pipeline for both real-time and historical data. It leverages stream processing and retains an immutable log of all data.

Why use it?

The Kappa Architecture is ideal for:

Use cases that primarily require real-time data processing

Simplifying data architecture by eliminating batch processing

Leveraging the power and flexibility of stream processing frameworks

Enabling faster data processing and analysis

How to implement it?

A Kappa Architecture includes the following components:

Message queue or log to capture all data (Kafka, Kinesis)

Stream processing engine to process and serve data (Spark Streaming, Flink)

Serving layer to store and expose processed data (Cassandra, HBase)
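The key idea, one pipeline plus a replayable log, can be sketched as follows. The example assumes a Python list as the immutable log and two hypothetical processor functions. The same `build_view` code path handles both live processing and reprocessing of history, simply by starting from a different offset or swapping in new logic.

```python
# Append-only log of events; the list index plays the role of the offset.
LOG = [
    {"user": "alice", "amount": 30},
    {"user": "bob", "amount": 20},
    {"user": "alice", "amount": -10},  # a refund
    {"user": "bob", "amount": 5},
]

def build_view(log, processor, from_offset=0):
    """Run one stream processor over the log, starting at from_offset."""
    view = {}
    for record in log[from_offset:]:
        processor(view, record)
    return view

def net_spend(view, record):
    """Version 1 of the logic: sum every amount, refunds included."""
    view[record["user"]] = view.get(record["user"], 0) + record["amount"]

def gross_spend(view, record):
    """Version 2 of the logic: ignore refunds (negative amounts)."""
    if record["amount"] > 0:
        view[record["user"]] = view.get(record["user"], 0) + record["amount"]

view_v1 = build_view(LOG, net_spend)    # the view served today
view_v2 = build_view(LOG, gross_spend)  # replay from offset 0 with the new logic
```

When the business logic changes, there is no separate batch job to rewrite: the new processor replays the log into a fresh view, and the serving layer switches over once it has caught up. This only works if the log retains enough history, which is part of the upfront planning mentioned under the cons.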

Tools for Kappa Architecture:

Open-source: Apache Kafka, Apache Flink, Apache Druid

Cloud-agnostic: Confluent Platform, Materialize

Pros:

Simpler architecture than Lambda (no separate batch and speed layers)

Enables faster data processing and analysis

Leverages the power and flexibility of stream processing

Supports time-travel and reprocessing of historical data

Cons:

Requires more upfront planning and design

May be more resource-intensive than batch processing for large historical datasets

Relies heavily on the performance and reliability of the message queue and stream processing engine

May require specialized skills and tools for stream processing

Choosing the Right Ingestion Pattern

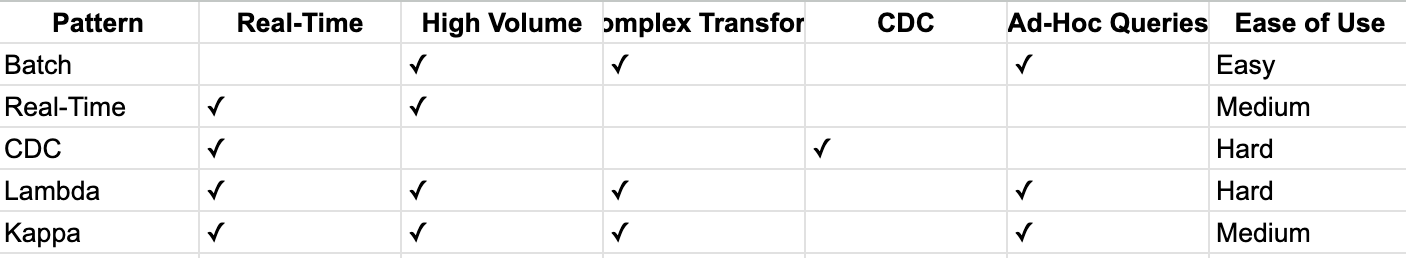

With several ingestion patterns available, each with its strengths and weaknesses, how do you choose the right one for your use case? Here's a comparison matrix to help guide your decision:

Some key factors to consider:

Data latency requirements (real-time vs. batch)

Data volume and velocity

Complexity of data transformations and analytics

Need for change data capture and data synchronization

Ad-hoc querying and data exploration needs

Technical skills and resources available

In general, start with the simplest pattern that meets your requirements and evolve your architecture as your needs grow and change.

Conclusion

Choosing the right data ingestion design pattern is a critical step in building a successful AI and analytics platform. By understanding the strengths and weaknesses of each pattern and aligning them with your specific use case and requirements, you can design an architecture that is scalable, reliable, and performant.

Whether you choose batch ingestion for large-scale historical analysis, real-time ingestion for immediate insights and actions, CDC for data synchronization, or a more advanced architecture like Lambda or Kappa, the key is to start with a clear understanding of your goals and constraints.

Remember, data ingestion is just one piece of the puzzle. As you design and implement your ingestion pipeline, keep in mind the broader context of your data platform, including data storage, processing, analysis, and governance.

By taking a holistic and iterative approach to data architecture, you can build a platform that not only ingests data efficiently but also turns that data into valuable insights and drives meaningful business outcomes.

Onwards!